“Any sufficiently advanced technology is indistinguishable from magic.” - Arthur C. Clarke.

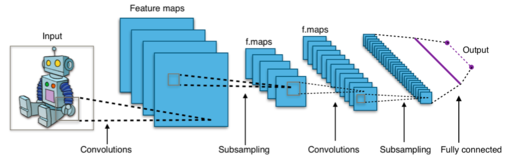

The Convolutional Neural Network (CNN) is such a technology. How it does what it does is truly indistinguishable from magic. Read our earlier post, “From Cats to Convolutional Neural Networks”, to understand why CNNs come close to human intelligence. Although the inner workings of a CNN can be explained, the magic remains. Fascinated by CNNs, we set out to come up with as many questions about them as we could, to understand why they are able to classify images, or any other kind of input, so well.

- What is convolution?

- What is pooling?

- Which pooling function is preferred - Max or Average?

- What is the role of activation functions in a CNN?

- Why is ReLU preferred over sigmoid in a CNN?

- Why does adding more layers increase the accuracy of the network?

- What is the intuition behind a CNN?

- What is stride?

- Is it necessary to include zero-padding?

- What is parameter sharing, and why is it important?

- What would happen if we left the pooling layer out of a CNN? Why is pooling so important?

- What brings CNNs closer to biological systems?

- How do we decide how much training, validation and test data to give the network?

- What is cross-validation and why is it important?

- Which cross-validation technique is better - bootstrap or k-fold?

- When does a CNN fail?

- How can we know for certain whether the network fails because it was given inadequate input or because it has too few layers?

- What are the hidden layers doing?

- How does the backpropagation algorithm work across the network?

- Can a CNN learn continuously, or does training need to be completed before running inference?

- Why are GPUs necessary to train a CNN?

- Why does using a pre-trained network increase the learning speed of new categories?

- When will we say a CNN is not able to learn?

- Why is it sufficient to train only the fully connected layer of a pre-trained network to learn new categories?

- How important is it to provide the right set of data to train a CNN?

- Can we use the features learned by the inner layers of a CNN?

- What is generalization?

- What is overfitting?

- Why is it important to apply distortions to input images to train an image classifier?

- What are hyper-parameters?

- What is an epoch?

- What decides the number of examples per epoch?

- What is gradient descent?

- What is a loss function?

- Why is cross-entropy the preferred cost function in a CNN?

- Which one is better - batch gradient descent or stochastic gradient descent?

- What is the importance of the learning rate in training a CNN?

- Which method is optimal - keeping the learning rate constant or changing it as training progresses?

- How have CNNs reduced the work of data scientists in terms of feature selection?

- Why is starting a CNN’s training with random weights preferable to starting it with zero weights?

- Why is a Gaussian distribution the preferred choice for drawing random weights?

- How does regularization help in preventing overfitting?

- How is a trained CNN evaluated?

- What is the importance of bias in training a CNN? Is it really that significant?

- What are the best practices followed in CNNs?

- Why is training a CNN a costly affair?

- Why can a CNN be applied to any kind of learning, including images, natural language processing and speech?

- Why is a CNN capable of computing any kind of function?

- How do we tweak the number of convolutions and pooling functions in each layer?

- What does pre-processing mean in a CNN?

We hope we have covered most of the questions that explain the magic of Convolutional Neural Networks. If you have any more questions about CNNs, please feel free to add them in the comments.
